Building a web service with Node.js in Visual Studio Part 4: Node.js web service basics

November 13, 2014

Introduction

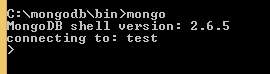

In the previous post we practiced some basic MongoDB queries that will be useful in our project later on. In this post we’ll start looking at the basic structure of a Node.js web service.

The goal of this post is to go through some code basics in Node.js, so we’ll start gently.

Keep in mind that Node.js supports asynchronous code execution by default. You don’t need to add any special code for that. In .NET we can add support using the async-await pattern, but currently the starting point for an ASP.NET MVC project template is synchronous code execution.

A consequence of asynchronous code execution in Node.js is the ubiquity of callbacks. We’ll see callbacks passed into a large number of methods. Node.js runs our JavaScript on a single thread driven by an event loop. Passing a callback lets that thread hand off a slow I/O operation and go on serving other requests instead of sitting blocked until the result arrives. The end result is a better utilisation of the CPU. We saw similar behaviour in .NET in the post on async-await referenced above.
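The callback style can be illustrated with a short sketch. The fakeQuery function below is a hypothetical stand-in for a slow operation such as a database call; it is not a real API and simply uses setTimeout to deliver its result later. The call returns at once, so the rest of the script runs before the callback does.

```javascript
// Records the order in which things happen.
var events = [];

// Hypothetical stand-in for a slow I/O call (not a real database API).
// By Node.js convention the callback receives (error, result).
function fakeQuery(name, callback) {
    setTimeout(function () {
        callback(null, name + ' finished');
    }, 10);
}

events.push('query started');
fakeQuery('customers', function (err, result) {
    if (err) { throw err; }
    events.push(result);
    // prints: query started -> script continues -> customers finished
    console.log(events.join(' -> '));
});
events.push('script continues');
```

Note that 'script continues' is recorded before the query result, even though it appears later in the source.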

Selecting the template

We installed the ingredients necessary for building a Node.js project in Visual Studio. Open Visual Studio 2013 Pro and let’s see which template can be useful for us:

We can immediately rule out all Azure applications. We don’t have any existing Node.js apps so option #2 can be ruled out too. There are two templates that install the “Express” framework. Recall that Express is a web application framework built on top of Node, so it’s something like ASP.NET running on IIS. However, those templates implement views and a lot more code that we’d need to discuss. Since we’re building a web service we’d need to start by removing code. I’d like to avoid that. Also, adding new code and providing an explanation is easier at this beginning level than saying “we don’t need that, you can erase it”.

So we have two remaining “blank” applications: a console and a web app. We saw the console app in the first post of this series. We could actually go with that template and build upon it from scratch. However, we’ll take the other template instead so that we have something to start with at least. It won’t install Express.js but we can install it ourselves – it will be a good occasion to take a look at the Node Package Manager tool.

Select the “Blank Node.js Web Application” template, call the project CustomerOrdersApi and press OK.

Starting point

Most elements look familiar from the first part. We have a server.js which is the entry point to the application, similar to Program.cs in a .NET console app. It currently has the following contents:

    var http = require('http');
    var port = process.env.port || 1337;

    http.createServer(function (req, res) {
        res.writeHead(200, { 'Content-Type': 'text/plain' });
        res.end('Hello World\n');
    }).listen(port);

Even before going through this code let’s run it. Press F5 as you normally do in Visual Studio. This should open a browser, navigate to http://localhost:1337/ and that will print “Hello World” as plain text on the screen.

Let’s consider the bits of code in server.js which is the entry point of the application, much like Global.asax.cs in an ASP.NET web app:

var http = require('http');

“require” is the Node.js counterpart of a using directive in C#. We import the module called “http” which includes the tools for handling HTTP calls. This module is part of the Node.js core library so we didn’t have to install anything to import it.
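As a small illustration of how require behaves, the sketch below loads two modules that ship with Node.js. The variable names and the sample file path are arbitrary choices for the example.

```javascript
// Core modules are loaded by name, no installation step needed.
var http = require('http');
var path = require('path');

// require returns the object exported by the module...
console.log(typeof http.createServer); // function

// ...and caches it, so a second call hands back the same object.
var sameInstance = require('http') === http;
console.log(sameInstance); // true

// Other core modules work the same way.
console.log(path.basename('/app/server.js')); // server.js
```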

http.createServer(function (req, res)

We use the http module to create a server. The createServer method accepts a callback which in turn accepts parameters for the HTTP request (req) and the HTTP response (res). The “req” parameter will allow us to access the different parts of an incoming HTTP request: the headers, the query, the URL etc.
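To make the parts of “req” more concrete, here is a minimal sketch that pulls a few of them out. The describeRequest helper, the customerId query parameter and the fake request object are all made up for illustration. The sketch also assumes a Node.js version that provides the global URL class, which is newer than the version this series was written against.

```javascript
// Summarises the parts of a request that the callback can inspect.
// Works with any object shaped like Node's incoming request.
function describeRequest(req) {
    // req.url holds only the path and query string, so a base is
    // needed to parse it; the host used here is a placeholder.
    var parsed = new URL(req.url, 'http://localhost');
    return {
        method: req.method,
        path: parsed.pathname,
        customerId: parsed.searchParams.get('customerId'),
        userAgent: req.headers['user-agent']
    };
}

// A hand-made object standing in for a real incoming request.
var fakeRequest = {
    method: 'GET',
    url: '/orders?customerId=42',
    headers: { 'user-agent': 'example-client' }
};

var summary = describeRequest(fakeRequest);
console.log(summary);
```

Inside a real createServer callback the same helper could be called with the actual req object, since Node.js stores header names in lower case.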

.listen(port);

The listen(port) method of the server object returned by createServer makes sure that we’re listening to requests on the given port. The port is read from the environment variable process.env.port. If that’s not set then we fall back to 1337. Unsurprisingly we’d use port 80 for a public HTTP web server and 443 for HTTPS.
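The fallback in “process.env.port || 1337” can be written out more explicitly. The resolvePort helper below is a hypothetical sketch, not part of the template. Checking both PORT and port is an assumption on my part, made because environment variable names are case-sensitive outside Windows.

```javascript
// Picks the port from an environment-like object, falling back to
// the template's default of 1337 when nothing usable is set.
function resolvePort(env) {
    var parsed = parseInt(env.PORT || env.port, 10);
    return isNaN(parsed) ? 1337 : parsed;
}

console.log(resolvePort({}));               // 1337
console.log(resolvePort({ PORT: '8080' })); // 8080
console.log(resolvePort({ port: 'abc' }));  // 1337

// In server.js this would be called with the real environment.
var port = resolvePort(process.env);
```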

    res.writeHead(200, { 'Content-Type': 'text/plain' });
    res.end('Hello World\n');

The body of the callback function is very simple. It sets the response code to 200 OK and the content-type header to text/plain. Then we send a string back saying Hello World.

Let’s output some simple HTML instead. Change the callback function as follows:

    res.writeHead(200, { 'Content-Type': 'text/html' });
    var url = req.url;
    res.end("<html><body><p>Requested URL: " + url + "</p></body></html>");

We read the URL of the request and put it in a paragraph. Run the project and you’ll see the following output:

Requested URL: /

Now extend the URL to e.g. http://localhost:1337/hello/bye and the output will be the following:

Requested URL: /hello/bye

Let’s read some other properties of “req”:

    var url = req.url;
    res.end("<html><body><p>Request properties: URL: " + url + ", method: " + req.method +
        ", http version: " + req.httpVersion + "</p></body></html>");

The names are mostly self-explanatory: req.method holds the HTTP verb such as GET or POST, and req.httpVersion holds the protocol version, e.g. 1.1.

We can indicate in the header section that some resource was not found:

res.writeHead(404, { 'Content-Type': 'text/html' });

The 404 will be visible in the developer tools of your browser. Here’s the output in Chrome:

So you can see that we can read a lot of properties of the request and set the response accordingly. We could in theory use this simple template for a web service and handle all the GET, POST etc. requests based on the incoming URL in a gigantic if-else statement. However, that would be a bad idea. We’d like to build request handlers comparable to the routes and controllers in ASP.NET MVC.
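To see why the if-else approach does not scale, consider this rough sketch. The route function and the /customers URL are invented for illustration and are not part of the project yet.

```javascript
// Decides on a response purely from the method and URL.
// The /customers route is made up for this illustration.
function route(method, url) {
    if (method === 'GET' && url === '/') {
        return { status: 200, body: 'Welcome' };
    } else if (method === 'GET' && url === '/customers') {
        return { status: 200, body: 'List of customers' };
    } else if (method === 'POST' && url === '/customers') {
        return { status: 201, body: 'Customer created' };
    } else {
        return { status: 404, body: 'Not found' };
    }
}

var http = require('http');

// Created but not started here; call server.listen(1337) to try it.
var server = http.createServer(function (req, res) {
    var result = route(req.method, req.url);
    res.writeHead(result.status, { 'Content-Type': 'text/plain' });
    res.end(result.body);
});
```

Every new resource or HTTP verb adds another branch to the same function, and URLs with parameters would need string parsing on top of that.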

That’s where the Express.js library enters the scene. We’ll import it and at the same time discuss the Node Package Manager in the next post.
