Test Driven Development in .NET Part 2: continuing with Red, Green, Refactor

March 28, 2013

In the previous post we looked at the very basics of test first development in .NET and more specifically the Red-Green-Refactor cycle. This post is a direct continuation so we’ll build on the test project we started working on.

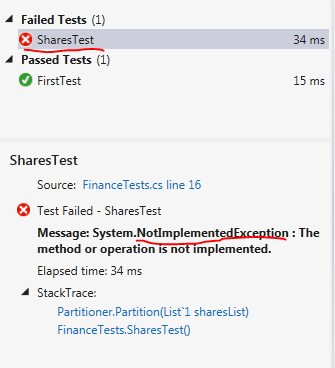

Pass the test

Currently we have a failing test in our test project: the SharesTest() fails. Remove the ‘throw new NotImplementedException’ from the Partition method and replace it with the simplest possible return statement that will make the compiler happy:

return new Partition();

Run the tests now and we should still have a failing test: the Size property was initialised to 0 which is different from the expected value of one:

It’s now time to make sure that our test passes. The simplest way is probably the following:

    public Partition Partition(List<Share> sharesList)
    {
        return new Partition() { Size = sharesList.Count };
    }

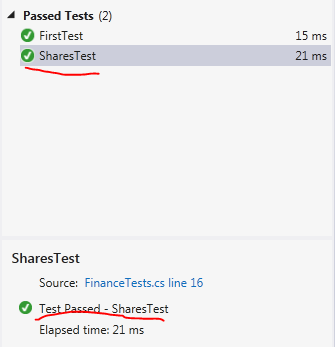

Run the test now and you should see that SharesTest indeed passes:

We assign the Count property of the sharesList parameter to the Size property of the Partition object. As we passed in a list with a single share, the result will be 1, which is the expected value. This is of course not a real-life implementation yet. The Partition method doesn’t even look at the integer passed to the Partitioner constructor. That’s OK as we’ll now move on to the next step in the cycle: Refactoring.

The code generator called the integer parameter of the Partitioner constructor ‘p’. That’s not very descriptive so change the code as follows:

    private int _partitionSize;

    public Partitioner(int partitionSize)
    {
        this._partitionSize = partitionSize;
    }

Re-run the test to make sure it’s working. This is a good exercise: you change something in the implementation and then run the tests to check whether you broke something.

We now know a bit more about the purpose of SharesTest: it tests whether a group of size one is partitioned into a Partition of size one. There are different ways to name a test but a descriptive approach can be worthwhile. Rename the SharesTest method to Partitioning_a_list_of_one_item_by_one_produces_a_partition_of_size_one().

Run the test and you’ll see the new method name appear in the Test Explorer. As the name describes what the test does it’s easy to see which functions pass or fail. SharesTest doesn’t tell you anything: what Shares? What functionality? It passes, but what is it that passes? Choosing a long name like that saves you a lot of time: the title tells you which functionality is broken.

We can again stop for some reflection: is it enough to test a single case? Should we check other partition values such as -1, 0, 2, 100 etc.? Testing the value of 1 is probably not enough as the users may pass in values that are outside of your control.

You may be tempted to add several assertions to one test, but resist that: each unit test should contain a single assertion. We test one scenario and no more, so if the test fails you’ll immediately see which functionality is broken.

Insert a new test in FinanceTests, a test that checks if a collection of 2 items returns a Partition of size 2:

    [Test]
    public void Partitioning_a_list_of_two_items_by_one_produces_a_partition_of_size_two()
    {
        List<Share> sharesList = new List<Share>();
        Share shareOne = new Share();
        shareOne.Maximum = 100;
        shareOne.Minimum = 13;
        sharesList.Add(shareOne);
        sharesList.Add(new Share() { Maximum = 50, Minimum = 10 });
        Partitioner partitioner = new Partitioner(1);
        Partition partition = partitioner.Partition(sharesList);
        Assert.AreEqual(2, partition.Size);
    }

We now have a collection of 2 shares. The collection is partitioned into groups of one and we expect the resulting Partition to have two elements. Run the tests and you’ll see that they pass. That’s no surprise: our implementation of the Partition method still doesn’t even look at the _partitionSize field, so the result is always the size of the incoming list, whether it holds 2, 5 or 100 shares. So it’s now time to come up with something more realistic.

Add another property to the Partition object:

public IList<IList<Share>> PartitioningResult { get; set; }

The result of the partitioning process should be a list of lists of shares. If we start with a list of 10 shares which should be cut into two subgroups of 5 then we’ll end up with a list of lists where the individual subgroups have a size of 5. The Partition method might look like this:

    // Skip, Take and ToList are LINQ extension methods: the file needs 'using System.Linq;'
    public Partition Partition(List<Share> sharesList)
    {
        IList<IList<Share>> partitioningResult = new List<IList<Share>>();
        int total = 0;
        while (total < sharesList.Count)
        {
            // Take the next _partitionSize shares, starting after those already grouped
            List<Share> subGroup = sharesList.Skip(total).Take(_partitionSize).ToList();
            partitioningResult.Add(subGroup);
            total += _partitionSize;
        }
        return new Partition() { PartitioningResult = partitioningResult, Size = partitioningResult.Count };
    }

Inspect the code and make sure you understand what it is doing. It is straightforward: it chops up the incoming sharesList parameter into subgroups using LINQ and assigns the subgroups to the Partition object along with a new definition of Size, which is now the number of subgroups. Run the tests and you’ll see that they pass.

The next phase would be to decide what scenarios to test: what if we have a list of 5 shares and want to partition them into groups of two? Should the Partition function throw an exception? Should it return lists of 2-2-1? Or should it drop the element(s) that don’t fit the partition size? These are all questions that the domain expert should be able to answer so that you can write proper tests.
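Before asking the domain expert it can help to know what the current implementation already does in that case. The following is a minimal sketch that runs the same Skip/Take loop on plain integers, so it needs neither Share nor Partition. PartitionSketch, Chop and Describe are hypothetical names, and the guard against non-positive sizes is an assumption added for the sketch, not something the Partition method above contains.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper for illustration only, not part of the Finance project.
public static class PartitionSketch
{
    // Same Skip/Take loop as the Partition method above, on plain integers.
    public static List<List<int>> Chop(List<int> items, int partitionSize)
    {
        // Assumption for this sketch: reject sizes of 0 or less,
        // because the loop below would otherwise never finish.
        if (partitionSize <= 0)
            throw new ArgumentOutOfRangeException("partitionSize");

        var result = new List<List<int>>();
        int total = 0;
        while (total < items.Count)
        {
            // Take returns fewer elements when fewer than partitionSize remain.
            result.Add(items.Skip(total).Take(partitionSize).ToList());
            total += partitionSize;
        }
        return result;
    }

    // Sizes of the subgroups joined with dashes.
    public static string Describe(List<List<int>> groups)
    {
        return string.Join("-", groups.Select(g => g.Count));
    }
}
```

Calling PartitionSketch.Chop with five items and a partition size of 2 yields three subgroups, and Describe returns "2-2-1". So the loop as written keeps the remainder in a smaller last group; whether that is the desired behaviour is still for the domain expert to decide.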

You can see now that a well written test suite will function as a list of specifications. You can of course have the specs listed in a Word document, but honestly, who has the time and energy to read and maintain that? How can you test the specifications in a Word document? If you instead write the tests directly in the Visual Studio editor those will never expire and with meaningful test method names will tell you clearly how the software is supposed to behave.

Test code quality

Test code is also an integral part of the solution so it should also be maintainable. You may think that the test project is less important than production code. The truth is that all important design rules, such as DRY (Don’t Repeat Yourself) still apply here. It needs to be well organised and documented so that you can find your way around when you come back to it to make changes.

As you add more and more test cases to our Finance test project you may be tempted to copy and paste the original Partitioning_a_list_of_one_item_by_one_produces_a_partition_of_size_one method, rename it and replace the parameters required by Partitioner, Partition and Assert. Why would you copy and paste any bit of code? To save time: it’s boring to type out the list of shares that’s needed in every test.

It’s a better idea to go with a helper method:

    private List<Share> CreateSharesListOfSize(int size)
    {
        List<Share> shares = new List<Share>();
        for (int i = 0; i < size; i++)
        {
            shares.Add(new Share() { Maximum = 130, Minimum = 15 });
        }
        return shares;
    }

The refactored test methods will look like this:

    [Test]
    public void Partitioning_a_list_of_one_item_by_one_produces_a_partition_of_size_one()
    {
        List<Share> sharesList = CreateSharesListOfSize(1);
        Partitioner partitioner = new Partitioner(1);
        Partition partition = partitioner.Partition(sharesList);
        Assert.AreEqual(1, partition.Size);
    }

    [Test]
    public void Partitioning_a_list_of_two_items_by_one_produces_a_partition_of_size_two()
    {
        List<Share> sharesList = CreateSharesListOfSize(2);
        Partitioner partitioner = new Partitioner(1);
        Partition partition = partitioner.Partition(sharesList);
        Assert.AreEqual(2, partition.Size);
    }

Additional considerations of tests

Besides being maintainable tests should be:

- Repeatable

- Independent

- Test only public members

- Atomic

- Deterministic

- Fast

A repeatable test means that if a test fails then it should always fail. We can’t say that a test fails only between 10am and 5pm; if it does, then it depends on some external Date function that the test has no control over. Make sure that all such dependencies are controlled by the test method to avoid any such constraints.
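One way to keep such a test repeatable is to hand the current time to the code under test instead of letting it read the system clock. The following is a minimal sketch under that assumption; TradingHours and its opening hours are hypothetical and not part of the Finance project.

```csharp
using System;

// Hypothetical class for illustration only.
public class TradingHours
{
    private readonly Func<DateTime> _now;

    // The clock is passed in, so a test can supply a fixed time
    // instead of depending on DateTime.Now.
    public TradingHours(Func<DateTime> now)
    {
        _now = now;
    }

    // Assumed rule: open from 10:00 (inclusive) to 17:00 (exclusive).
    public bool IsOpen()
    {
        int hour = _now().Hour;
        return hour >= 10 && hour < 17;
    }
}
```

A test can then construct the object with a fixed clock, e.g. new TradingHours(() => new DateTime(2013, 3, 28, 11, 0, 0)), and will get the same answer regardless of when it is run. Production code would pass () => DateTime.Now instead.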

Independent tests are tests that can be run in any order without affecting the pass/fail result. There should be no specification saying that test A must be run before test B for it to give the correct result. Don’t make a test dependent on the state left over by another test. Every test should have all necessary dependencies at its disposal and should start with a clean slate.

Testing public members only puts us in the shoes of the client, i.e. the consumer of the API. While writing the initial tests you are forced to think through the design of the API: what objects and methods are needed, what should we call them, what parameters should they take etc. A client is ultimately interested in the public design of the API not in the private elements which they do not even have visibility of. In addition, by testing public members only we can concentrate on the business rules and leave unimportant internal implementation details alone. An unimportant implementation detail is e.g. the assignment of the private variable in the Partitioner constructor. Do we really need to test if the Partitioner’s private integer field was assigned the value of the incoming parameter? Not really, it’s so trivial and it’s an internal implementation detail.

Atomic means that a unit test tests only one thing at a time, meaning you will have only one Assert statement within the body of the unit test.

A deterministic unit test is one that always provides one affirmative outcome: either pass or fail with 100% certainty, “maybe” and “almost” are not good enough.

You can guess what “fast” means. However, it’s not enough to say “yeah, it’s quite fast”. A good unit test runs VERY fast, we’re talking about 10-50 milliseconds. You should eliminate all factors that slow down the execution of the unit test. Accessing external resources such as web services, databases or physical files makes unit test execution slower, and also less reliable, as those resources must always be up and running and be in the state required by the code under test. We will look at such scenarios in later posts dealing with mocking dependencies.
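As a rough preview of the idea, the slow resource can be hidden behind an interface so that a test can substitute an in-memory stand-in. This is only a hand-written sketch, not a mocking framework: ISharePriceSource, FixedSharePriceSource and PriceRange are hypothetical names that do not appear in the Finance project.

```csharp
using System.Collections.Generic;

// Hypothetical abstraction over a slow resource such as a database or web service.
public interface ISharePriceSource
{
    IList<decimal> GetPrices();
}

// In-memory stand-in for tests: no I/O, and it always returns the same data.
public class FixedSharePriceSource : ISharePriceSource
{
    private readonly IList<decimal> _prices;

    public FixedSharePriceSource(params decimal[] prices)
    {
        _prices = new List<decimal>(prices);
    }

    public IList<decimal> GetPrices()
    {
        return _prices;
    }
}

// Hypothetical code under test; it only knows the interface.
public class PriceRange
{
    private readonly ISharePriceSource _source;

    public PriceRange(ISharePriceSource source)
    {
        _source = source;
    }

    // Difference between the highest and lowest price, or 0 for an empty list.
    public decimal Spread()
    {
        IList<decimal> prices = _source.GetPrices();
        if (prices.Count == 0) return 0m;

        decimal min = prices[0];
        decimal max = prices[0];
        foreach (decimal price in prices)
        {
            if (price < min) min = price;
            if (price > max) max = price;
        }
        return max - min;
    }
}
```

With new PriceRange(new FixedSharePriceSource(15m, 130m, 50m)) the Spread method returns 115, and the test never touches a real data store.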

How to organise the tests

There are certainly many ways to organise a test project. 10 developers may give you 11 different answers, but the following can work for many out there:

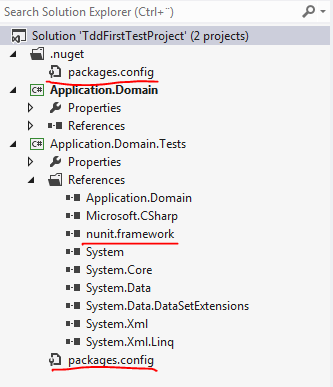

- Make sure to include your tests in a separate .NET project

- You should have as many test projects as you have ‘normal’ projects. Example: if your solution consists of Application.Web and Application.Domains then you should have two corresponding .NET test projects: Application.Web.Tests and Application.Domains.Tests

- One level down is the namespace, e.g. Finance. For every namespace you should have a Namespace_Test folder in the correct .NET test projects, Finance_Test in this example

- Below the namespace we have the Class, e.g. Share. For each class you should have a Class_Test folder, Share_Test in this example

- Within the Share_Test folder we’ll have our test class which tests the behaviour of the Share object

- Behaviour means the core business logic and making sure that unimportant internal implementation details are not tested. Those tests are not worth writing. E.g. testing a getter and setter is futile unless they incorporate important business logic, such as refusing certain values
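To illustrate the last point, here is a minimal sketch of a setter that does carry a business rule and is therefore worth a test. GuardedShare is a hypothetical variant of Share, and both the int type and the rule that Minimum cannot be negative are assumptions made for the example.

```csharp
using System;

// Hypothetical variant of Share for illustration only.
public class GuardedShare
{
    private int _minimum;

    public int Minimum
    {
        get { return _minimum; }
        set
        {
            // Assumed business rule: a negative minimum is refused.
            if (value < 0)
                throw new ArgumentOutOfRangeException("value", "Minimum cannot be negative.");
            _minimum = value;
        }
    }
}
```

A plain auto-property has nothing to verify, whereas here a test can assert that assigning -1 throws an ArgumentOutOfRangeException and that assigning 13 is stored.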

So our little .NET solution might look like this after some renaming:

You may be asking why the test suite of the Partitioner class has such a strange name, When_partitioning_shares.cs. Besides the fact that it describes what we test, i.e. partitioning a list of shares, check how the test class name and the individual test cases can be read together in the Test Explorer:

When partitioning shares, partitioning a list of two items by one produces a partition of size two. This sentence gives you the scenario and the expected outcome.

Keep test code DRY

The DRY, i.e. don’t repeat yourself, principle applies to the test code as well. There will be parts in the code that all the test methods will need. In our example a list of shares is created in Partitioning_a_list_of_one_item_by_one_produces_a_partition_of_size_one() and Partitioning_a_list_of_two_items_by_one_produces_a_partition_of_size_two(). Remove these two methods and instead add the following three:

    [Test]
    public void Partitioning_a_list_of_four_items_by_one_produces_a_partition_of_size_four()
    {
        List<Share> sharesList = CreateSharesListOfSize(4);
        Partitioner partitioner = new Partitioner(1);
        Partition partition = partitioner.Partition(sharesList);
        Assert.AreEqual(4, partition.Size);
    }

    [Test]
    public void Partitioning_a_list_of_four_items_by_four_produces_a_partition_of_size_one()
    {
        List<Share> sharesList = CreateSharesListOfSize(4);
        Partitioner partitioner = new Partitioner(4);
        Partition partition = partitioner.Partition(sharesList);
        Assert.AreEqual(1, partition.Size);
    }

    [Test]
    public void Partitioning_a_list_of_four_items_by_two_produces_a_partition_of_size_two()
    {
        List<Share> sharesList = CreateSharesListOfSize(4);
        Partitioner partitioner = new Partitioner(2);
        Partition partition = partitioner.Partition(sharesList);
        Assert.AreEqual(2, partition.Size);
    }

In other words, we run a test on a list of 4 shares in all 3 cases. The code that builds the list is repeated in every test method. As it turns out NUnit, and in fact all major test frameworks out there, makes it easy to run a piece of code before every test method in the test suite. This “pre-test” code must be decorated with the [SetUp] attribute. Update the test suite to the following:

    [TestFixture]
    public class When_partitioning_shares
    {
        List<Share> _sharesList;

        [SetUp]
        public void SetupTest()
        {
            _sharesList = CreateSharesListOfSize(4);
        }

        [Test]
        public void Partitioning_a_list_of_four_items_by_one_produces_a_partition_of_size_four()
        {
            Partitioner partitioner = new Partitioner(1);
            Partition partition = partitioner.Partition(_sharesList);
            Assert.AreEqual(4, partition.Size);
        }

        [Test]
        public void Partitioning_a_list_of_four_items_by_four_produces_a_partition_of_size_one()
        {
            Partitioner partitioner = new Partitioner(4);
            Partition partition = partitioner.Partition(_sharesList);
            Assert.AreEqual(1, partition.Size);
        }

        [Test]
        public void Partitioning_a_list_of_four_items_by_two_produces_a_partition_of_size_two()
        {
            Partitioner partitioner = new Partitioner(2);
            Partition partition = partitioner.Partition(_sharesList);
            Assert.AreEqual(2, partition.Size);
        }

        private List<Share> CreateSharesListOfSize(int size)
        {
            List<Share> shares = new List<Share>();
            for (int i = 0; i < size; i++)
            {
                shares.Add(new Share() { Maximum = 130, Minimum = 15 });
            }
            return shares;
        }
    }

The SetupTest method will be run before every test method in the fixture. It simply assigns a list of four shares to the private _sharesList field.

Conversely if you decorate your method with the TearDown attribute it will be run AFTER each test method execution. The TearDown method can be used to reset some state to an initial value or clean up resources.
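As a minimal sketch of how the two attributes pair up, the fixture below opens a resource in the SetUp method and releases it in the TearDown method. It assumes the NUnit package is referenced, as in the rest of this post; the fixture name and the use of a MemoryStream are made up for the example.

```csharp
using System.IO;
using NUnit.Framework;

// Hypothetical fixture for illustration only.
[TestFixture]
public class When_using_a_stream
{
    private MemoryStream _stream;

    [SetUp]
    public void OpenStream()
    {
        // Runs before each test: every test gets its own fresh stream.
        _stream = new MemoryStream();
    }

    [TearDown]
    public void CloseStream()
    {
        // Runs after each test: release the resource so nothing leaks into the next test.
        _stream.Dispose();
    }

    [Test]
    public void A_new_stream_is_empty()
    {
        Assert.AreEqual(0L, _stream.Length);
    }
}
```

Because the stream is created and disposed around every single test, no test can see data written by another one, which also supports the independence requirement discussed earlier.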

It’s quite tedious to create all these test cases, right? It would be best to create one test method and somehow run the same method using many different input parameters without having to copy-paste the existing code. It is possible using the TestFixture attribute. How it is done will be the topic of the next post – amongst several other features.