Introduction to Amazon Code Pipeline with Java part 6: third party action overview

May 1, 2016

Introduction

In the previous post we looked at the most important keywords related to AWS CodePipeline. A pipeline is a workflow of stages that together describe the delivery process for a piece of software. This piece of software is called an artifact, and it goes through various revisions as it is passed from one stage to another. Each stage can consist of one or more actions. An action is a task performed on an artifact. If all actions in a stage complete without a failure, the artifact moves on to the next stage in the pipeline. It’s possible to disable this transition, e.g. if a manual activation must be enforced.
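To make the transition rule concrete, here is a toy model of stages, actions and transitions. The names ActionState, Stage and ToyPipeline are hypothetical, and this is not how the AWS SDK models a pipeline; it only encodes the rule described above: a revision moves on when every action in the current stage has succeeded and the transition hasn’t been disabled.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical toy model for illustration only; these are not AWS SDK types.
enum ActionState { SUCCEEDED, FAILED, IN_PROGRESS }

final class Stage {
    final String name;
    final List<ActionState> actions;
    // Can be switched off, e.g. to enforce a manual activation before the next stage.
    boolean transitionToNextEnabled = true;

    Stage(String name, List<ActionState> actions) {
        this.name = name;
        this.actions = actions;
    }

    // The stage is complete only when every one of its actions has succeeded.
    boolean succeeded() {
        return actions.stream().allMatch(state -> state == ActionState.SUCCEEDED);
    }
}

final class ToyPipeline {
    final List<Stage> stages = new ArrayList<>();

    // Name of the stage the revision may move into next, or null if it must stay put.
    String nextStageAfter(int index) {
        Stage current = stages.get(index);
        boolean isLast = index == stages.size() - 1;
        if (isLast || !current.succeeded() || !current.transitionToNextEnabled) {
            return null;
        }
        return stages.get(index + 1).name;
    }
}
```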

In this post we’ll start with a high-level overview of third party action development.

Third party actions

Third party actions are somewhat like the custom plugins developed for Jenkins and TeamCity. An example of a third party action is the Apica Loadtest test action we saw before. Anyone can develop a third party action, but it needs to go through an approval process within AWS: it is reviewed and tested rigorously before it’s published on the CodePipeline console. In general, publishing a third party action in AWS is a longer and more complex process than listing a custom plugin for TeamCity or Jenkins.

As noted before, there are four types of action:

- Source action, e.g. a GitHub or AWS S3 checkout

- Build action to build the source

- Deploy action to deploy the artifact to one or more servers

- Test action to run tests on an artifact

If our custom third party action is approved by AWS, it will be available to all CodePipeline (CP) customers. E.g. if a CP user wants to add an automated load test to their pipeline, they can select the Apica Loadtest third party test action.

Custom job agent

A key component that third party action developers will need to work on is the job agent. A job agent is a continuously running process that polls a CodePipeline endpoint for new jobs at regular intervals, e.g. once every 10 seconds. For example, when the pipeline reaches the Apica Loadtest action, our job agent will be able to pull that job and process it. Keep in mind that the job agent polls CP for new jobs: CP doesn’t push signals about a new job to an agent, it’s the other way around. It’s perfectly fine for two or more agents to poll the same CP endpoint for new jobs so that the total load is distributed across multiple agents.
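The pull model can be sketched as follows. JobSource, InMemoryJobSource and PollingAgent are hypothetical names. In a real agent, poll would wrap an AWS SDK call for CodePipeline’s PollForThirdPartyJobs operation; here an in-memory queue stands in for the endpoint so the example runs on its own. The queue hands each job to exactly one agent, which is what lets several agents share the load in this sketch; how CodePipeline itself coordinates competing workers is not modelled here.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Queue;
import java.util.concurrent.ConcurrentLinkedQueue;
import java.util.function.Consumer;

// Hypothetical stand-in for the CodePipeline endpoint that agents poll.
interface JobSource {
    // Returns up to maxBatchSize job IDs; an empty list means "nothing to do right now".
    List<String> poll(int maxBatchSize);
}

// In-memory fake so the example runs without AWS; each job is handed out only once.
final class InMemoryJobSource implements JobSource {
    private final Queue<String> pending = new ConcurrentLinkedQueue<>();

    void submit(String jobId) {
        pending.add(jobId);
    }

    @Override
    public List<String> poll(int maxBatchSize) {
        List<String> batch = new ArrayList<>();
        String jobId;
        // Queue.poll() removes the head atomically, so two agents never get the same job.
        while (batch.size() < maxBatchSize && (jobId = pending.poll()) != null) {
            batch.add(jobId);
        }
        return batch;
    }
}

final class PollingAgent {
    private final JobSource source;
    private final Consumer<String> handler;

    PollingAgent(JobSource source, Consumer<String> handler) {
        this.source = source;
        this.handler = handler;
    }

    // One polling round: the agent asks for work; nothing is ever pushed to it.
    int pollOnce() {
        List<String> jobs = source.poll(1);
        jobs.forEach(handler);
        return jobs.size();
    }

    // Polls until the thread is interrupted, pausing between rounds (e.g. 10 s).
    void run(long intervalMillis) throws InterruptedException {
        while (!Thread.currentThread().isInterrupted()) {
            pollOnce();
            Thread.sleep(intervalMillis);
        }
    }
}
```

With the 10-second interval from the text, an agent would call run(10_000) on a background thread.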

In addition, with some careful coding, a job agent can be made deploy-proof. By that I mean that a new version of the job agent application can be deployed even while a job is being executed, and the freshly deployed agent will be able to pick up the job that’s in progress. No database is required to enable this scenario. We’ll get to code examples later in this series, and then you’ll see how this is done.
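The post doesn’t say here how the agent survives a redeploy, so the following is only one possible approach, stated as an assumption: keep the job’s progress outside the agent. CodePipeline lets a job worker return a continuation token with a successful result for an action that is still in progress, and subsequent jobs for that action carry the token. In the sketch below, ResumableJob, StepResult, LoadTestService and ResumableJobHandler are hypothetical; the token is simply the ID of the externally running load test, so a freshly deployed agent with no memory of earlier steps can still check on it.

```java
// Hypothetical job as seen by the agent: its ID plus the continuation token, if any,
// returned by an earlier execution step.
final class ResumableJob {
    final String jobId;
    final String continuationToken; // null on the very first step

    ResumableJob(String jobId, String continuationToken) {
        this.jobId = jobId;
        this.continuationToken = continuationToken;
    }
}

// Hypothetical outcome of one step: either finished, or "call me again with this token".
final class StepResult {
    final boolean finished;
    final String continuationToken;

    private StepResult(boolean finished, String continuationToken) {
        this.finished = finished;
        this.continuationToken = continuationToken;
    }

    static StepResult done() {
        return new StepResult(true, null);
    }

    static StepResult continueWith(String token) {
        return new StepResult(false, token);
    }
}

// Hypothetical external service; the load test's state lives here, not in the agent.
interface LoadTestService {
    String start(String jobId);        // returns the external test ID
    boolean isFinished(String testId);
}

final class ResumableJobHandler {
    private final LoadTestService service;

    ResumableJobHandler(LoadTestService service) {
        this.service = service;
    }

    StepResult execute(ResumableJob job) {
        if (job.continuationToken == null) {
            // First step: start the work and hand its external ID back as the token.
            return StepResult.continueWith(service.start(job.jobId));
        }
        // Later step, possibly on a freshly deployed agent: the token is all it needs.
        String testId = job.continuationToken;
        return service.isFinished(testId) ? StepResult.done() : StepResult.continueWith(testId);
    }
}
```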

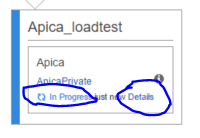

The job agent keeps communicating with CP during the job execution and pushes signals about the state of the job. When the job finishes, the agent pushes a SUCCESS or FAILURE status so that the end user knows about the outcome. At the time of writing, it’s not possible to push anything complex about the state of the job to the CP GUI, e.g. build a graph or show custom messages. All of that needs to be implemented externally. As an example, consider how an ongoing load test is represented in an action in CP.

The “Details” link points to an external website at our company that shows a lot of details about the ongoing load test, such as response times, transaction rates, URL failures and a large number of graphs. None of that can be pushed as status messages to CP. If you want to inform your users about any kind of job details, you’ll need to provide this link in the test action. We’ll see how to do that later, but note that the links associated with a custom test action must be provided during the AWS approval process. The job agent cannot set them to an arbitrary link during job execution.
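The job lifecycle described above (acknowledge the job, then finish with either SUCCESS or FAILURE) can be captured in a small wrapper. JobReporter and ReportingExecutor are hypothetical names; a real agent would back the three reporter methods with the corresponding CodePipeline API calls. The sketch makes sure every job ends with exactly one final status, even when the work throws an exception.

```java
import java.util.concurrent.Callable;

// Hypothetical reporting interface; a real agent would back these methods with the
// corresponding CodePipeline API calls.
interface JobReporter {
    void acknowledge(String jobId); // the job is now in progress
    void success(String jobId);     // final status: SUCCESS
    void failure(String jobId);     // final status: FAILURE
}

final class ReportingExecutor {
    private final JobReporter reporter;

    ReportingExecutor(JobReporter reporter) {
        this.reporter = reporter;
    }

    // Runs the work and always ends with exactly one final status for the job.
    void run(String jobId, Callable<Boolean> work) {
        reporter.acknowledge(jobId);
        boolean passed;
        try {
            passed = Boolean.TRUE.equals(work.call());
        } catch (Exception e) {
            passed = false; // an exception counts as FAILURE instead of escaping
        }
        if (passed) {
            reporter.success(jobId);
        } else {
            reporter.failure(jobId);
        }
    }
}
```

Deciding the outcome first and reporting it outside the try block means a problem while sending the SUCCESS status is not itself turned into a second, FAILURE report.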

So what type of application is this job agent? There are multiple solutions here, of course, but the application needs to be able to run long-running processes. Our job agent is a Java Maven web application that starts a daemon thread upon startup, and that thread performs all the polling and job execution tasks. The application is compiled into a .war file, which in turn is deployed to an Amazon Elastic Beanstalk environment.

The war file is deployed to an Elastic Beanstalk application called codepipelinejobagent.
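Starting a daemon thread on startup could look roughly like this. JobAgentLauncher is a hypothetical name, and the pollRound callback stands for whatever one polling round does. In a .war, start and stop would typically be called from a servlet context listener when the application is deployed and undeployed; that wiring is an assumption and is left out to keep the example free of servlet dependencies.

```java
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;

// Hypothetical launcher: runs the agent's polling rounds on a single daemon thread.
final class JobAgentLauncher {
    private final Runnable pollRound;
    private ScheduledExecutorService scheduler;

    JobAgentLauncher(Runnable pollRound) {
        this.pollRound = pollRound;
    }

    // Would be called once when the web application starts.
    synchronized void start(long delayMillis) {
        scheduler = Executors.newSingleThreadScheduledExecutor(runnable -> {
            Thread thread = new Thread(runnable, "codepipeline-job-agent");
            thread.setDaemon(true); // must not keep the JVM alive on its own
            return thread;
        });
        scheduler.scheduleWithFixedDelay(() -> {
            try {
                pollRound.run();
            } catch (RuntimeException e) {
                // If the task threw, the executor would silently stop scheduling it.
                System.err.println("Polling round failed: " + e);
            }
        }, 0, delayMillis, TimeUnit.MILLISECONDS);
    }

    // Would be called when the web application is undeployed.
    synchronized void stop() throws InterruptedException {
        if (scheduler != null) {
            scheduler.shutdownNow();
            scheduler.awaitTermination(5, TimeUnit.SECONDS);
        }
    }
}
```

scheduleWithFixedDelay measures the pause from the end of the previous round, so a slow round simply pushes the next poll back, and the try/catch keeps one failing round from ending the agent’s polling for good.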

You may wonder why we use a web application to start a long-running process. The reason is that the job agent also has a simple HTML status page that our DevOps team can use to monitor the status of the job agent. However, that’s only an implementation detail, and the exact technology you use for the job agent can vary. It must, however, support long-running processes and be able to communicate with AWS over HTTP. Also, the development language of choice must be supported by an AWS SDK, and SDKs are published for many languages, such as Java, C#, PHP, Python and more. If you prefer, you can go for a Windows service or its Linux equivalent; it doesn’t matter. In this series I’ll stick to Java, though.
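The status page can be as simple as a few counters shared between the polling thread and an HTTP handler. In the author’s .war setup a servlet would be the natural home for it; to keep this sketch dependency-free it uses the HTTP server that ships with the JDK (com.sun.net.httpserver) instead. AgentStatus and the /status path are hypothetical.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;
import java.time.Instant;
import java.util.concurrent.atomic.AtomicLong;
import java.util.concurrent.atomic.AtomicReference;

// Written by the polling thread, read by the status page; each field is thread-safe.
final class AgentStatus {
    private final AtomicLong jobsProcessed = new AtomicLong();
    private final AtomicReference<Instant> lastPoll = new AtomicReference<>();

    // Called by the polling loop after every round.
    void recordPoll(int jobsInRound) {
        lastPoll.set(Instant.now());
        jobsProcessed.addAndGet(jobsInRound);
    }

    long jobsProcessed() {
        return jobsProcessed.get();
    }

    String renderHtml() {
        Instant last = lastPoll.get();
        return "<html><body><h1>Job agent status</h1>"
                + "<p>Last poll: " + (last == null ? "never" : last.toString()) + "</p>"
                + "<p>Jobs processed: " + jobsProcessed.get() + "</p>"
                + "</body></html>";
    }

    // Serves the page at /status using the JDK's built-in HTTP server.
    HttpServer serve(int port) throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress(port), 0);
        server.createContext("/status", exchange -> {
            byte[] body = renderHtml().getBytes(StandardCharsets.UTF_8);
            exchange.getResponseHeaders().set("Content-Type", "text/html; charset=utf-8");
            exchange.sendResponseHeaders(200, body.length);
            try (OutputStream os = exchange.getResponseBody()) {
                os.write(body);
            }
        });
        server.start();
        return server;
    }
}
```

For example, new AgentStatus().serve(8080) would expose the page at http://localhost:8080/status, and the polling loop would call recordPoll after each round.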

We’ll continue with the job agent communication process overview in the next part.
