Web optimisation: resource bundling and minification in .NET4.5 MVC4 with C#

January 14, 2013

We will look at the bundling and minification techniques available in a .NET4.5 MVC4 project. Bundling and minification help optimise a web page by reducing the number of requests sent to the server and the size of the downloads. I assume most readers will know what the terms mean, but here comes a short explanation just in case.

Bundling: if your website requires resource files, typically CSS and JavaScript files, then the browser will have to request them from the server. Ideally these resources should be bundled so that the browser can receive the external files in fewer requests: all CSS content is copied into one single CSS file – a CSS bundle – and the same can be done with the JS files, creating a JS bundle.

Minification: it is obvious that larger external files take longer to download. CSS and JS files can usually be made smaller by removing comments, white space, making variable names shorter etc.

The two techniques applied together will decrease the page load time making your readers happy.
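To make the two terms concrete, here is a deliberately naive sketch in C#. It is not how System.Web.Optimization or WebGrease work internally. The names ToyOptimizer, Bundle and MinifyCss are made up for illustration, and the regex-based minifier would break on real-world CSS (for example on comment markers inside strings or on selectors where a space before a colon matters).

```csharp
using System;
using System.Text.RegularExpressions;

public static class ToyOptimizer
{
    // Bundling: concatenate the contents of several files into one response body.
    public static string Bundle(params string[] fileContents)
    {
        return string.Join("\n", fileContents);
    }

    // Minification (very naive): drop /* ... */ comments and squeeze white space.
    public static string MinifyCss(string css)
    {
        string noComments = Regex.Replace(css, @"/\*.*?\*/", string.Empty, RegexOptions.Singleline);
        string collapsed = Regex.Replace(noComments, @"\s+", " ");
        // Remove the spaces around punctuation that this simple CSS does not need.
        string tight = Regex.Replace(collapsed, @"\s*([{};:,])\s*", "$1");
        return tight.Trim();
    }
}

public static class ToyOptimizerDemo
{
    public static void Main()
    {
        string site = "/* main styles */\nbody {\n    color: black;\n}\n";
        string extra = "h2 {\n    margin: 0;\n}\n";

        // One request instead of two, and fewer bytes on the wire.
        Console.WriteLine(ToyOptimizer.MinifyCss(ToyOptimizer.Bundle(site, extra)));
        // prints: body{color:black;}h2{margin:0;}
    }
}
```

The real framework does considerably more, such as shortening local variable names in JavaScript, but the principle is the same: fewer files and fewer bytes.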

These two web optimisation techniques are well known in the web development world, but they are cumbersome to carry out manually. Many programmers simply omit them due to time constraints. However, MVC4 in .NET4.5 has made it extremely straightforward to perform bundling and minification so there should be no more excuses.

For the demo project start Visual Studio 2012 and create an MVC4 internet application with .NET4.5 as the underlying framework. Navigate to Index.cshtml of the Home view and reduce its contents to the following:

@{
    ViewBag.Title = "Home Page";
}

<h2>Bundling and minification</h2>
<div>Welcome to bundling and minification.</div>

If you go to the Content folder you’ll see a default CSS file called Site.css. Add two more CSS files to the folder to simulate tweaking styles: mystyle.css and evenmorestyle.css. For the sake of simplicity I copied the contents of Site.css over to the two new files, but feel free to fill them with any other CSS content. Remember, we only pretend that we need 3 style sheets to render our website; we will not really need them.

If you take a look at the Scripts folder it includes a lot of default scripts: jQuery, knockout, modernizr. We will pretend that our site needs most of them.

Locate _Layout.cshtml in the Views/Shared folder. Note that the _Layout view will already include some default bundling and minification features but we’ll start from scratch to make the transition from no optimisation to more optimisation more obvious. So update the contents of _Layout.cshtml to the following – note that you can drag and drop the CSS and JS files onto the editor, VS will create the ‘link’ and ‘script’ tags for you:

<!DOCTYPE html>

<html lang="en">

<head>

<title>@ViewBag.Title - My ASP.NET MVC Application</title>

<link href="~/Content/Site.css" rel="stylesheet" />

<link href="~/Content/mystyle.css" rel="stylesheet" />

<link href="~/Content/evenmorestyle.css" rel="stylesheet" />

<script src="~/Scripts/modernizr-2.5.3.js"></script>

</head>

<body>

<div>

@RenderBody()

</div>

<script src="~/Scripts/jquery-1.8.3.js"></script>

<script src="~/Scripts/jquery-ui-1.9.2.js"></script>

<script src="~/Scripts/jquery.unobtrusive-ajax.js"></script>

<script src="~/Scripts/jquery.validate.js"></script>

<script src="~/Scripts/jquery.validate.unobtrusive.js"></script>

<script src="~/Scripts/knockout-2.1.0.js"></script>

</body>

</html>

As you see, our page needs a lot of external files. You may be wondering why modernizr.js is included separately from the other JS files. The reason is that modernizr.js makes browsers without HTML5 support “understand” the new tags available in HTML5 such as “section” or “aside”. It therefore needs to be loaded before the HTML is rendered, otherwise its effects will not be seen on the rendered HTML.

You will probably know why JS files are included at the bottom of HTML code: if they come first then the browser will not start rendering the HTML until the JS files have been downloaded. By downloading them as late as possible we can improve the perceived response time. The version numbers of the jQuery files may not be the same as in your solution; I ran an update-package command in the package manager to have the latest version of each. It does not really matter for our discussion though, so it’s OK to include the files that Visual Studio inserted by default.

Run the application in Internet Explorer and check the HTML source:

No surprises here: there is no bundling or minification going on here.

While IE is still open press F12 to turn on the developer tools. Go to the Network tab:

Clear the browser cache in Internet Options to simulate a user who comes to our site for the first time, press “Start capturing” in the developer tools and refresh the page. The developer tool will capture the rendering time of each component of the home page. You may see something like the following:

The response times on your screen may of course differ, but the point is that each resource stands on its own: they are not bundled or minified in any way. Of course some will be downloaded in parallel by the browser – the exact number of parallel connections depends on the browser type. This number is 6 in IE8 and higher. However, we can do a lot better. Click ‘Go to detailed view’ and select the Timings tab to see the total rendering time. It may look something like this:

The total rendering time in my case was 0.82 sec.

If you go back to the summary view of the developer tools you’ll see at the bottom of the page that the total size of the downloaded content was about 0.9MB.

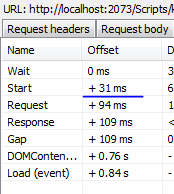

Our goal is to reduce both the rendering time and the size of the resources to be downloaded. Because the number of parallel requests is limited, a page with a lot of resources forces some of them to wait until others have been downloaded. This further increases the response time. If you highlight knockout.js in the developer tools output, go to the detailed view and select the Timings tab, you’ll see a column called ‘offset’. These values tell you how much time has passed since the original request:

In my case knockout.js started to download 31ms after the original request.
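The queueing effect can be approximated with a back-of-the-envelope model. The sketch below assumes six parallel connections, counts only the CSS and JS files of our layout, and pretends that every request takes equally long, which real requests do not. The class name ConnectionModel is hypothetical.

```csharp
using System;

public static class ConnectionModel
{
    // Number of sequential "rounds" needed when at most maxParallel requests
    // can be in flight at once. This is a simplification: a real browser
    // starts the next request as soon as any connection becomes free.
    public static int Rounds(int requestCount, int maxParallel)
    {
        if (maxParallel <= 0) throw new ArgumentOutOfRangeException("maxParallel");
        return (requestCount + maxParallel - 1) / maxParallel; // integer ceiling
    }
}

public static class ConnectionModelDemo
{
    public static void Main()
    {
        // 3 CSS files + 7 JS files in the unbundled layout.
        Console.WriteLine(ConnectionModel.Rounds(10, 6)); // prints 2
        // 1 CSS bundle + 2 JS bundles, which is what we are aiming for.
        Console.WriteLine(ConnectionModel.Rounds(3, 6));  // prints 1
    }
}
```

Even in this crude model the unbundled page needs a second round of downloads, whereas three bundles fit comfortably into the first one.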

The tools needed to optimise our page are included in the References folder: System.Web.Optimization and WebGrease. WebGrease is a low level command line utility that the framework uses under the hood to carry out the minification and bundling of our external resources.

Locate Global.asax.cs and check the code that’s found there by default. You will see a call to BundleConfig.RegisterBundles. Select ‘RegisterBundles’ and press F12 to go to the source. This is the place where the BundleCollection object is filled with our bundles. As you can see the MVC4 internet template builds some of the bundling for us.
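For orientation, the relevant part of Global.asax.cs looks roughly like the sketch below. The other registration calls that the template generates (areas, filters, routes and so on) and the project namespace are omitted here, so treat it as an outline rather than a copy of your file.

```csharp
using System.Web;
using System.Web.Optimization;

public class MvcApplication : HttpApplication
{
    protected void Application_Start()
    {
        // The template registers areas, filters and routes here as well;
        // those calls are left out of this sketch.

        // Hand the application-wide bundle collection to our configuration class.
        BundleConfig.RegisterBundles(BundleTable.Bundles);
    }
}
```

BundleTable.Bundles is the single collection the framework consults when a bundle URL is requested, which is why the registration happens once at application start.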

Each type of external resource has its own bundle type: CSS -> StyleBundle, JS -> ScriptBundle. Both take a string parameter in their constructor: a virtual path to the bundled resource. The constructor is followed by a call to the Include method where we pass in the real paths to the external files to be included in that bundle. Example:

bundles.Add(new StyleBundle("~/Content/themes/base/css").Include(
    "~/Content/themes/base/jquery.ui.core.css",
    "~/Content/themes/base/jquery.ui.resizable.css"));

The bundle represented by the virtual path ‘~/Content/themes/base/css’ will include 2 jQuery UI files. When the browser requests a resource with that path, MVC will intercept the request, collect the contents of the files into one consolidated resource and send that consolidated resource as the response to the browser.

The Include() method is quite clever:

– it understands the * wildcard. Example: “~/Scripts/jquery.validate*” will include all resources within the Scripts folder that start with ‘jquery.validate’.

– it will not include redundant files. Example: you may have 3 files that start with jQuery. These are jQuery.js, jQuery.min.js and jQuery.vsdoc for VS intellisense. Include() will apply conventions to exclude the ‘min’ and ‘vsdoc’ files even if you specified with a ‘*’ character that you want all files whose name starts with ‘jQuery’. Include() will only select the ‘normal’ jQuery.js file. This way we will avoid duplicated downloads.

So let’s bundle our CSS files first. You can erase the default code in the RegisterBundles call to start from scratch:

public static void RegisterBundles(BundleCollection bundles)
{
}

Knowing what we know now let’s try to construct a bundle of our 3 CSS files:

public static void RegisterBundles(BundleCollection bundles)
{
    bundles.Add(new StyleBundle("~/bundles/css")
        .Include("~/Content/Site.css", "~/Content/mystyle.css", "~/Content/evenmorestyle.css"));
}

Next up is our JS files. You may recall that modernizr.js stands on its own before any HTML is rendered so we will build a bundle for that resource as well. You may be wondering why we want to have a bundle with one file and the answer is that bundling also performs minification at the same time. We’ll see how that works in practice later in this post.

Extend the RegisterBundles call as follows:

public static void RegisterBundles(BundleCollection bundles)
{
    bundles.Add(new StyleBundle("~/bundles/css")
        .Include("~/Content/Site.css", "~/Content/mystyle.css", "~/Content/evenmorestyle.css"));

    bundles.Add(new ScriptBundle("~/bundles/modernizr")
        .Include("~/Scripts/modernizr-*"));
}

The ‘*’ wildcard will make sure that if we update this file to a newer version in the future the bundle will include that automatically. We don’t need to come back to this code and update the version number.

The last bundle we want to create is for the JS files at the bottom of the layout page. Extend the bundle registration as follows:

public static void RegisterBundles(BundleCollection bundles)
{
    bundles.Add(new StyleBundle("~/bundles/css")
        .Include("~/Content/Site.css", "~/Content/mystyle.css", "~/Content/evenmorestyle.css"));

    bundles.Add(new ScriptBundle("~/bundles/modernizr")
        .Include("~/Scripts/modernizr-*"));

    bundles.Add(new ScriptBundle("~/bundles/js")
        .Include("~/Scripts/jquery*", "~/Scripts/knockout-*"));
}

We want all files starting with ‘jquery’ and the knockout.js file irrespective of its version number. The bundling framework will usually be intelligent enough to order well-known files sensibly: jquery.js must be loaded before jquery.ui and the framework ‘knows’ that. However, it’s not always perfect. You may run into situations where you must spell out each file in the bundle so that they are loaded in the correct order. So if your JS code does not work as expected, this just might be the reason.
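If the wildcard version ever loads scripts in the wrong order, spelling the files out could look like the following sketch. The file names and version numbers are the ones used in the layout earlier in this post; they are assumptions about your Scripts folder and need adjusting to whatever is actually there.

```csharp
using System.Web.Optimization;

public class BundleConfig
{
    public static void RegisterBundles(BundleCollection bundles)
    {
        // Each file is listed explicitly instead of relying on "~/Scripts/jquery*",
        // so the intended load order is visible in the code.
        bundles.Add(new ScriptBundle("~/bundles/js").Include(
            "~/Scripts/jquery-1.8.3.js",
            "~/Scripts/jquery-ui-1.9.2.js",
            "~/Scripts/jquery.unobtrusive-ajax.js",
            "~/Scripts/jquery.validate.js",
            "~/Scripts/jquery.validate.unobtrusive.js",
            "~/Scripts/knockout-2.1.0.js"));
    }
}
```

The price of the explicit list is that hard-coded version numbers must be updated when a package is upgraded. The default MVC4 template sidesteps this with the {version} token, e.g. "~/Scripts/jquery-{version}.js".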

A note on virtual paths and relative references:

Generally you can use any path as the virtual path in the ScriptBundle/StyleBundle constructor; it doesn’t need to exist as a real physical path. However, be careful with stylesheets. As you know, you can refer to images from within a stylesheet with relative URLs, e.g.:

background-image: url("images/mybackground.png");

The browser will request the image RELATIVE to where it found the stylesheet, e.g. at /Content/images/mybackground.png if the stylesheet was served from /Content/Site.css. Now check the virtual path we provided in the StyleBundle constructor: “~/bundles/css”. This will translate to an HTML link tag similar to the following:

<link rel="stylesheet" href="/bundles/css" />

When the browser sees that it needs to locate images/…png from this particular stylesheet it will think that the stylesheet came from a file called “css” in the directory “bundles”. Therefore it will make a request for “/bundles/images/…png” and that file will probably not exist. That particular request will not be intercepted and the server returns a 404. So to be on the safe side it’s a good idea to specify a virtual path that better corresponds to the real physical path to the CSS files. Update the following:

bundles.Add(new StyleBundle("~/bundles/css")
    .Include("~/Content/Site.css", "~/Content/mystyle.css", "~/Content/evenmorestyle.css"));

to

bundles.Add(new StyleBundle("~/content/css")
    .Include("~/Content/Site.css", "~/Content/mystyle.css", "~/Content/evenmorestyle.css"));

Now the browser will think the CSS file came from a file called “css” in the directory “content” and sends a request for “/content/images/…png”, which is the correct location. You can still have e.g. ~/content/styles or ~/content/ohmygod as the virtual path, but the folder name, i.e. ‘content’ in this case, should correspond to where the CSS files are located.
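The resolution rule can be checked outside the browser with System.Uri, which resolves relative references in the same way. The host name localhost and the class name RelativeUrlDemo are just placeholders for this sketch.

```csharp
using System;

public static class RelativeUrlDemo
{
    // Resolve a relative URL the way a browser would, against the URL
    // the stylesheet was downloaded from.
    public static string Resolve(string stylesheetUrl, string relativeUrl)
    {
        return new Uri(new Uri(stylesheetUrl), relativeUrl).AbsoluteUri;
    }

    public static void Main()
    {
        const string image = "images/mybackground.png";

        Console.WriteLine(Resolve("http://localhost/bundles/css", image));
        // prints: http://localhost/bundles/images/mybackground.png

        Console.WriteLine(Resolve("http://localhost/content/css", image));
        // prints: http://localhost/content/images/mybackground.png
    }
}
```

Only the last path segment of the stylesheet URL is replaced, which is why the "directory" part of the virtual path has to match the folder that really holds the images.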

Following the same reasoning if you know that any of your JS files makes a reference to other files using relative paths then you will need to update the virtual paths of the ScriptBundle object as well: “~/scripts/js”.

Let’s carry on: how do we render the link and script tags of those bundles?

This is very easy as Razor has built-in methods to achieve this:

@Styles.Render("~/content/css")
@Scripts.Render("~/bundles/js")

MVC will take care of rendering the link and script tags. Another, more fine-grained way of doing this is to use the following in the HTML code:

<link href="@BundleTable.Bundles.ResolveBundleUrl("~/content/css")" rel="stylesheet" type="text/css" />

The optimisation framework will also add more information to the URL: a query string value that will avoid caching old versions of the bundle. If you update one of the files in the bundle then a new query string will be generated and the browser will have to fetch the new updated version instead of using the cached one.
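The idea behind such a version token can be sketched as a hash of the bundle content. The code below is an illustration only: the framework's actual algorithm may well differ, and the names VersionTokenDemo and Token are hypothetical.

```csharp
using System;
using System.Security.Cryptography;
using System.Text;

public static class VersionTokenDemo
{
    // Derive a URL-safe token from the bundle content.
    // Same content gives the same token; changed content gives a new one.
    public static string Token(string bundleContent)
    {
        using (SHA256 sha = SHA256.Create())
        {
            byte[] hash = sha.ComputeHash(Encoding.UTF8.GetBytes(bundleContent));
            return Convert.ToBase64String(hash)
                .TrimEnd('=')
                .Replace('+', '-')
                .Replace('/', '_');
        }
    }

    public static void Main()
    {
        string before = Token("body{color:black;}");
        string after = Token("body{color:red;}");

        Console.WriteLine("/content/css?v=" + before);
        Console.WriteLine("/content/css?v=" + after);
        Console.WriteLine(before == after); // prints False: new content, new URL
    }
}
```

Because the URL changes whenever the content changes, the bundle can be cached aggressively without the risk of serving stale files.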

Before we update our code we can test the following: start the application and extend the URL to send a request for one of the bundled resources using its virtual path, e.g. http://localhost:xxxx/bundles/js. You should see something like this:

It’s looking very much like minified JavaScript. I cannot be sure just by looking at the contents that it includes everything from the bundled resources but believe me it does.

So now we’re ready to update _Layout.cshtml:

<!DOCTYPE html>

<html lang="en">

<head>

<title>@ViewBag.Title - My ASP.NET MVC Application</title>

@Styles.Render("~/content/css")

@Scripts.Render("~/bundles/modernizr")

</head>

<body>

<div>

@RenderBody()

</div>

@Scripts.Render("~/bundles/js")

</body>

</html>

Run the application again, check the generated HTML code and you’ll see…:

…not quite what you expected, right? The bundled files still appear as individual files, so what’s going on? It turns out that the debug/release settings of your application will make bundling/minification behave differently. In debug mode bundling is ignored for easier debugging and we ran the application exactly in that mode. How do we know that? Check web.config and you’ll see the following tag under system.web:

<compilation debug="true" targetFramework="4.5" />

Change debug to false and re-run the application. The source code should look similar to this:

That looks better. The files have been bundled and the correct link and script tags have been created. Also note the query string mentioned before attached to the href and src values.

As a result we have 1 link tag instead of 3, and 2 script tags instead of the 7 we started off with.

There is an alternative way to force bundling even if debug = true in web.config. Go to BundleConfig.RegisterBundles and add the following code after the bundles.Add calls:

BundleTable.EnableOptimizations = true;

This will override debug = true in the web.config file and emit single script and link tags.
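Put together, the complete BundleConfig class from this post could look like the sketch below. The project namespace is omitted and the file names are the ones assumed throughout the post.

```csharp
using System.Web.Optimization;

public class BundleConfig
{
    public static void RegisterBundles(BundleCollection bundles)
    {
        bundles.Add(new StyleBundle("~/content/css")
            .Include("~/Content/Site.css", "~/Content/mystyle.css", "~/Content/evenmorestyle.css"));

        bundles.Add(new ScriptBundle("~/bundles/modernizr")
            .Include("~/Scripts/modernizr-*"));

        bundles.Add(new ScriptBundle("~/bundles/js")
            .Include("~/Scripts/jquery*", "~/Scripts/knockout-*"));

        // Force bundling and minification regardless of the debug attribute
        // of the compilation element in web.config.
        BundleTable.EnableOptimizations = true;
    }
}
```

Forcing optimisation is handy while testing the bundles, but you may want to remove the last line afterwards so that debug sessions serve the individual, readable files again.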

As mentioned before bundling also performs minification: you can verify this by requesting the bundled resource in the URL, i.e. by requesting http://localhost:xxxx/bundles/modernizr?v=jmdBhqkI3eMaPZJduAyIYBj7MpXrGd2ZqmHAOSNeYcg1

You should see a jumbled version of the original modernizr.js file.

Have we achieved any improvement in the response time?

Run the same test with the web developer tool as we performed in the beginning. You should definitely get a lower response time. Also check the number of resources and the total size of the resources that the browser needs to download. The number of requests is reduced as we have fewer resources to download and the total size of the resources is greatly reduced due to the minification process.

We achieved all this with very little work – at least compared to how you would have done all this manually.

Some of you may have CoffeeScript files in your web project. You probably would like to bundle and minify them as well. I’ll demonstrate in the next blog post exactly how to do that.
