Using Amazon DynamoDb for IP and co-ordinate based geo-location services part 6: uploading IPv4 range to DynamoDb

April 26, 2015

Introduction

In the previous post we successfully created a limited IPv4 range file ready to be uploaded to DynamoDb. We saw how the relevant bits were extracted from the reduced subset of the MaxMind CSV source file and how the DynamoDb-specific input file was created.

In this post we’ll see how to upload the source file to DynamoDb using the bulk insertion tools available there. We’ll only import our limited test data but the same steps apply for large data sets as well.

Table setup in DynamoDb

Log in to the AWS web console and navigate to DynamoDb. Create a new table with the following characteristics:

- Name: geo-ip-range-test

- Primary key type: hash and range

- Hash attribute name: “network_head” of type Number

- Range attribute name: “network_start_integer” of type Number

- (Click Continue to come to the indexes page)

- Select Index type “Local secondary index”: this will automatically add “network_head (Number)” as the index hash key

- As the index range key enter “network_last_integer” of type Number

- The index name will be autocompleted to network_last_integer-index, which is good enough

- Make sure that “All attributes” is selected in the Project attributes drop-down list

- Click “Add index to table”

- (Click continue)

- Specify read and write capacity units at 5. This is fine for our small demo data set but will surely be too low for a 10-million-row table. The write throughput will need to increase a lot when you’re ready to import the full data set of approx. 10 million rows, otherwise the insertion process will take a very long time. You can raise the write throughput just for the import phase, e.g. to 10000, and then reduce it back to 5 or even lower, as writes won’t occur often, if at all. Similarly, the read throughput will need to be much higher for the real database, otherwise the queries will easily run into exceptions: with a large data set and low read throughput a query may need to scan too many records and quickly exceed the provisioned read throughput limit. We’ve set the read throughput to 1000 for our live geo IP range table

- (Click continue)

- You can choose to set up a basic alarm. This is not vital for this demo exercise but is very useful for production databases. I’ll let you decide whether you want to be notified in case of a throughput limit breach

- Click continue to reach the Review pane and click Create

Wait for the table to reach status ACTIVE.
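
To get a feel for why the write throughput matters so much for the full import, here is a rough back-of-the-envelope estimate in Java. It is only a sketch under stated assumptions: every row is at most 1 KB so that each insert consumes a single write capacity unit, and the import can use the full provisioned write throughput without throttling. The class and method names are made up for this illustration and are not part of any AWS library.

```java
import java.time.Duration;

public class ImportTimeEstimate {

    // Rough lower bound on the import time.
    // Assumption: each item is at most 1 KB, so one insert costs one write capacity unit.
    public static Duration estimateImportTime(long itemCount, long writeCapacityUnits) {
        if (itemCount < 0 || writeCapacityUnits <= 0) {
            throw new IllegalArgumentException(
                    "itemCount must be >= 0 and writeCapacityUnits must be > 0");
        }
        // Ceiling division: a partially used second still counts as a full second.
        long seconds = (itemCount + writeCapacityUnits - 1) / writeCapacityUnits;
        return Duration.ofSeconds(seconds);
    }

    public static void main(String[] args) {
        long rows = 10_000_000L; // approximate size of the full data set
        for (long writeUnits : new long[] {5L, 10_000L}) {
            Duration d = estimateImportTime(rows, writeUnits);
            System.out.printf("%d write units: about %d minutes (%d full days)%n",
                    writeUnits, d.toMinutes(), d.toDays());
        }
    }
}
```

Under these assumptions the full data set would need roughly 23 days at 5 write units but only about 17 minutes at 10000, not counting the time the pipeline itself needs to start up.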

Importing the records

Click the “Export/Import” button in the menu:

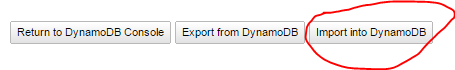

Select the IP range table and choose to import into DynamoDb.

You’ll be directed to the “Create Import Table Data Pipeline” view. Select the S3 input folder that holds the DynamoDb input JSON file we created previously. Provide an S3 log folder as well. These logs can provide important information in case the import process fails. You can set the throughput rate to 100% as no other process will need access to the IP range table during this time. Leave the execution timeout at 24 hours. You can also provide an email address to receive notifications.

Make sure that the selected pipeline role has access to DynamoDb and S3. Click “Create import pipeline” and you’ll see the pipeline listed in the Import Pipelines column.

Refresh the screen using the green arrow icon in the top right-hand corner. You should see that the EMR activity is “waiting for a runner”.

Be patient: it can take up to 15-20 minutes for the process to complete. We could probably have inserted the demo records one by one using code, but I think this is a good exercise for importing the full data set later.

If you have access to Amazon Data Pipeline then you can follow the process there directly. After a while the process should be RUNNING, and the same status is shown in DynamoDb. Refresh the status periodically until the job finishes.

It’s a good sign that the pipeline state and EmrActivity status are both marked as FINISHED in green.

Go back to the tables list in DynamoDb and check the contents of geo-ip-range-test.

In the next post we’ll see how to query this table using the AWS SDK for Java to find the geoname ID of a single IP address.


Why did you create the local secondary index? The query + filter you use later on doesn’t utilise it.

Unless I’m doing something wrong, the query can be quite inefficient and depending on the IP address you could be doing several pages of empty queries before finding a result.

To be honest I don’t remember anymore why the secondary index was created. We abandoned this project soon after I finished the documentation series. You might want to redo the process without adding the secondary index and see if it still works fine. //Andras

Turns out I was doing something wrong. I wasn’t sorting in descending order which was making it inefficient. The secondary index does nothing though and I’d recommend removing it from the article.