Web farms in .NET and IIS using Web Farm Framework 2.2 part 1: how to set up a web farm using WFF

July 4, 2013

So far in the series on web farms we’ve looked at two concrete solutions for load balancing: Network Load Balancing and Application Request Routing. Both are software-based solutions that are relatively easy to get started with. However, there’s still a certain amount of manual work you need to perform: adding new servers, installing applications, installing load balancers etc.

There are frameworks out there that can take the burden of manual installations and settings off your shoulders. However, they can be quite complex with a steep learning curve. Also, as there is a lot of automation involved, it’s important to understand them inside out.

Microsoft provides two frameworks as mentioned in the first part of the series on web farms: the Web Farm Framework (WFF) and Windows Azure Services. As the title of this post suggests we’ll look at WFF a bit more closely. The latest version is WFF2.2 at the time of writing this post.

Note: if you are completely new to web farms in IIS and .NET then start here. Some concepts may be difficult to understand if you lack the basics.

General

WFF is a module add-on to IIS. WFF uses the Web Deploy module to update products and applications. Here you’ll find the homepage of WFF2.0 but we’ll concentrate on WFF2.2 which is the most up-to-date version at the time of writing this post. Some of the more interesting features include the following:

- Server provisioning (often called elastic scale): WFF keeps track of all product installations on the server so that you can provision new servers at any time with almost no effort. The process takes a server with only the operating system installed and brings it up to the same state as the existing web servers

- Application provisioning: WFF can perform application installations on each machine in the web farm one by one. It takes the server out of rotation, installs the components, brings the server back online, and then continues with the next server. It’s possible to roll out applications using Web Platform Installer or Web Deploy

- Content provisioning: WFF also manages application content provisioning and updates

- Load balancing support: WFF has built-in support for Application Request Routing (ARR) and third party load balancers so it can manage servers and sites for you

- Log consolidation and statistics: WFF offers status and trace logs from all servers in the web farm

- Extensibility: WFF is highly configurable and allows you to integrate your own applications and processes into WFF

- API: WFF can be accessed programmatically using the Microsoft.Web.Farm namespace. It can also be managed through PowerShell commands.
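To make the application provisioning flow a bit more tangible, here is a minimal Python sketch of the "one server at a time" idea. It is only a simulation under my own assumptions: the `Server` class, the `rolling_install` function and the server names are hypothetical and have nothing to do with the real WFF API.

```python
from dataclasses import dataclass, field


@dataclass
class Server:
    """Hypothetical stand-in for a web farm member (not a WFF type)."""
    name: str
    in_rotation: bool = True
    installed: set = field(default_factory=set)


def rolling_install(servers, component):
    """Install `component` on one server at a time, so that at most
    one server is out of rotation at any moment."""
    log = []
    for server in servers:
        server.in_rotation = False           # take the server out of rotation
        log.append((server.name, "offline"))
        server.installed.add(component)      # simulated installation step
        log.append((server.name, "installed"))
        server.in_rotation = True            # bring the server back online
        log.append((server.name, "online"))
    return log


farm = [Server("web1"), Server("web2")]
events = rolling_install(farm, "UrlRewrite2")
for name, state in events:
    print(name, state)
```

The point of the loop is that the second server is never touched before the first one is back in rotation, so the farm keeps serving requests throughout the update.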

It’s important to stress that WFF is not a load balancer in itself but works in unison with load balancers. The default load balancer that WFF works with is ARR.

The disadvantage of WFF is that it introduces an extra point of failure: it depends on a single controller node. If that fails then WFF will be unable to monitor or manage the server farm until it is back online again. If you make a change to your primary server then that change won’t be propagated to the secondary servers. Your web farm will still continue to work, i.e. your clients will still get web responses from your website but WFF will not be able to synchronise between your server farm machines to keep them up to date. This shortcoming may be corrected in a future version. Read on to find out the role of each server type in a WFF setup.

The starting point

The plan is to start the investigation from scratch. I now have 4 clean machines at my disposal to test WFF: one controller machine, one primary server and two secondary servers. Every machine has Windows Server 2008 R2 and IIS7.5 installed and they all have a 64-bit architecture. There’s no website deployed on any of them yet. All machines sit within the same network so that they can communicate with each other. Don’t make your life harder by putting one machine in the cloud and the other in the local network of your company. Make the start as easy as possible.

The roles of each machine are as follows:

- Controller: the WFF controller server that manages the web farm. This is the only machine that needs to have WFF installed. It monitors the status of the web farm machines, provides synchronisation, provisions applications, etc. It is a real workhorse

- Primary server: this is the blueprint machine for all web farm machines. The changes made on this machine will be propagated to the secondary servers.

- Secondary servers: the “slaves” of the primary server. They will be updated according to the changes on the primary server.

The secondary servers must be of the same architecture as the primary server, i.e. either 32 or 64 bit. You must have an administrator account on the machines.
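The roles and the architecture rule can be sketched as a small data model. This is just an illustration in Python: the `Machine` class, the helper functions and the machine names are hypothetical, and the sketch assumes exactly one primary server, as in my test setup.

```python
from dataclasses import dataclass, field


@dataclass
class Machine:
    """Hypothetical description of a machine in the farm."""
    name: str
    role: str                 # "controller", "primary" or "secondary"
    arch: int                 # 32 or 64
    products: set = field(default_factory=set)


def check_farm(machines):
    """Return a list of problems found in the farm layout."""
    primaries = [m for m in machines if m.role == "primary"]
    if len(primaries) != 1:
        return ["expected exactly one primary server"]
    primary = primaries[0]
    problems = []
    for m in machines:
        # Secondary servers must match the architecture of the primary.
        if m.role == "secondary" and m.arch != primary.arch:
            problems.append(
                f"{m.name}: {m.arch}-bit differs from primary ({primary.arch}-bit)"
            )
    return problems


def missing_products(primary, secondary):
    """Products present on the primary but not yet on the secondary."""
    return sorted(primary.products - secondary.products)


farm = [
    Machine("ctrl", "controller", 64),
    Machine("web-pri", "primary", 64, {"UrlRewrite2"}),
    Machine("web-sec1", "secondary", 64),
    Machine("web-sec2", "secondary", 32),
]
print(check_farm(farm))
print(missing_products(farm[1], farm[2]))
```

The set difference in `missing_products` mirrors the "blueprint" idea: whatever the primary has and a secondary lacks is what needs to be propagated.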

Setting up the controller

So now I’ve logged on to the controller machine. Installing WFF is at present not as straightforward as I initially thought. I expected that the latest Web Platform Installer (WPI), which is at version 4.5 at this date, would be enough to select WFF and install it with all its dependencies. However, WPI 4.5 doesn’t list that product at all.

Instead you’ll need to install v3 of the Web Platform Installer, available here. Install it and then download v2.2 of WFF from here. On the next screen, select the correct package for the architecture of the machine:

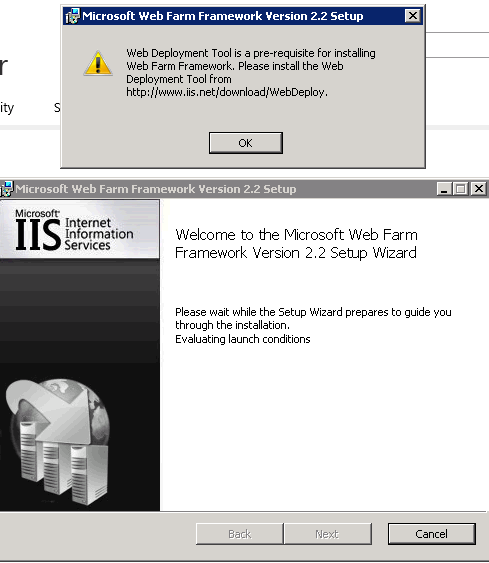

When the installation starts you may be greeted with the following message:

DON’T go to the link shown on the little popup. It will lead you to the Web Deploy v3 download page. It will also update your freshly acquired WPI v3 to v4.5, arghh! As it turns out we’ll need Web Deploy 2 instead.

You can download Web Deploy 2.0 from this link. Select the correct package according to the architecture of the machine and step through the very simple installer.

So now you should have the following platforms available among the applications on the controller machine:

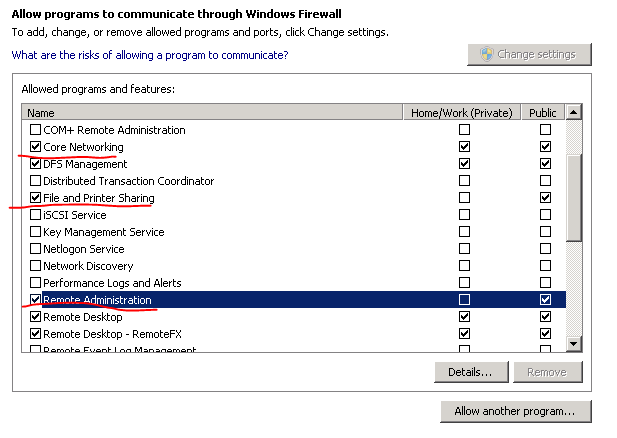

Now go back to the WFF 2.2 download page and try to install WFF again. Hopefully you’ll get the same message as me:

Yaaay! I don’t really understand why this process is so cumbersome. It’s as if Microsoft want to hide this product. If you happen to know the reason then feel free to comment.

The controller machine will have the main instance of WFF in the network. WFF will install a WFF agent on the web farm machines.

Setting up the secondary servers

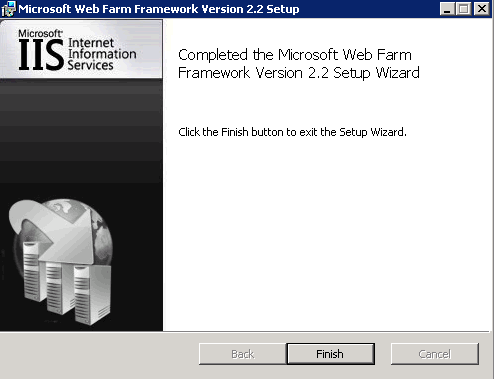

As mentioned above you should have an admin account on the servers. This account will be used when we create the web farm in a later step. We also need to make a couple of exceptions in the firewall. Open the Control Panel and select the System and Security group:

Then select the option marked below:

You’ll need to open up 3 channels. They are Core networking, File and printer sharing and Remote administration:

Do this on all secondary servers in the web farm.
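If you want a rough check that a server is reachable through the firewall, a few lines of Python will do. Treat this as a sketch under assumptions: ports 135 (RPC endpoint mapper) and 445 (SMB) are my guess at what the Remote administration and File and printer sharing rule groups commonly cover, and an open port does not prove that WFF itself will be able to connect. The function names are made up for this example.

```python
import socket


def port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers name resolution failures, refused connections and timeouts.
        return False


def check_server(host, ports=(135, 445)):
    """Map each port to whether it accepted a TCP connection."""
    return {port: port_open(host, port) for port in ports}


# Demo against a throwaway local listener so the sketch runs anywhere.
listener = socket.socket()
listener.bind(("127.0.0.1", 0))
listener.listen(5)
demo_port = listener.getsockname()[1]
print(check_server("127.0.0.1", ports=(demo_port,)))
listener.close()
```

On the controller you would call `check_server` with the machine name of each secondary server instead of the local demo address. A `False` for every port usually points to a misspelled machine name or a firewall rule that is still closed.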

Create a server farm

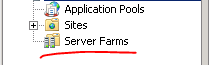

These steps should be performed on the controller server where we installed WFF 2.2. Open up the IIS manager and you should see a new node called Server Farms:

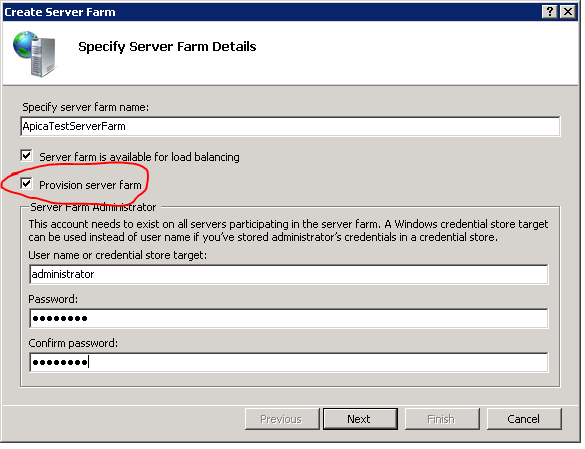

Right-click that node and select Create Server Farm… This will open the Create Server Farm window. The first step is to give the web farm some name and provide the administrator account. Don’t worry too much about the name, you can always change it later. Make sure to select the Provision server farm option:

The Server farm is available for load balancing option will set up Application Request Routing (ARR) as the load balancer. Uncheck this checkbox if you have a different load balancing option in place.

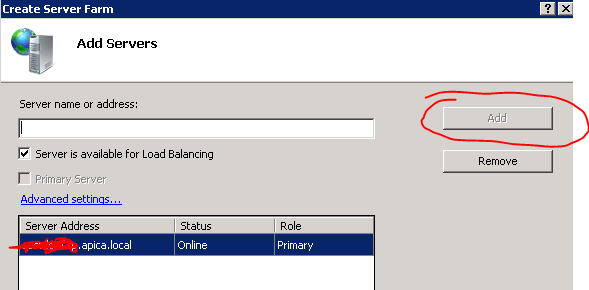

Click next to go to the Add Servers window. Here you can add all the servers participating in the server farm. I’ll first add the primary server so I enter the primary server machine name, check the “Primary Server” checkbox and click add. When you click add then the wizard will attempt to connect to the server using the admin account you’ve provided. Upon successful setup the primary server should be added to the list of servers:

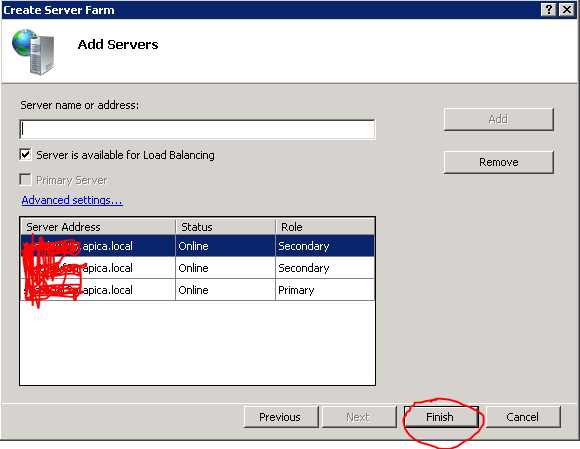

Then add the secondary servers the same way. If there’s a communication error then you’ll see an error message: maybe the machine name is not spelled correctly, or there’s a firewall problem. At the end of the process I have 3 machines: 1 primary and 2 secondary:

Click finish and there you are, you’ve just set up a web farm using WFF! Open the Server Farms node and you’ll see the farm you’ve just created.

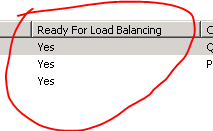

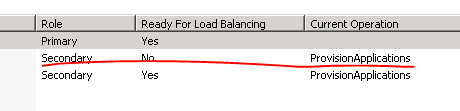

There are a couple of things going on right after the setup process. WFF will install the WFF agents on the primary and secondary servers and it will also synchronise all content, applications, configurations etc. between the primary and secondary servers. Select the Servers node under the farm you’ve just created. This will open up the Servers section in the middle of the screen. At first you may see No under Ready for load balancing:

Here it already says yes as I forgot to save the picture just after the farm creation, but it should say no at first. Look at the bottom half of the screen. You will see that WFF starts the synchronisation process right away:

After a couple of minutes the ‘no’ should change to a ‘yes’.

Testing the primary server sync

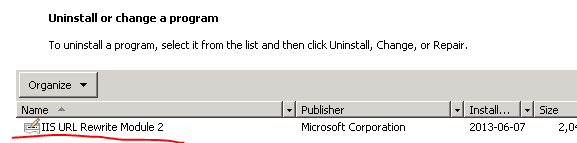

Let’s now test if an application is copied from the primary server to the secondary ones. Log onto the primary server and download WPI v3 like we did above. Using WPI, install URL Rewrite 2.0. Go back to the controller machine and watch the status of each web farm member. You’ll see that each member machine is temporarily removed from the network while it is being updated:

The machine is brought back online and then it’s the second machine’s turn to be updated. I’ll now log onto the secondary machines to see if the update really happened, and it actually did:

So far so good!

Adding and removing servers are straightforward operations. Just select the following links in the Actions pane on the right:

You can see the WFF trace messages in the bottom half of the screen. I believe it’s quite straightforward what you can do here: pause, resume, clear and filter the messages. A maximum of 1000 messages are saved in the trace queue. When this size has been reached the message at the top of the queue is removed in favour of the most recent message. Check out the column headers of the trace messages, they are easy to understand.
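The behaviour of the trace queue is that of a bounded first-in, first-out buffer. Here is a minimal Python sketch of the idea. It is not how WFF implements it internally (I don’t know that), and I’m assuming that "the top of the queue" means the oldest message.

```python
from collections import deque

MAX_TRACE_MESSAGES = 1000  # the limit mentioned above

# A deque with maxlen silently drops the oldest item when a new one
# is appended to a full queue.
trace = deque(maxlen=MAX_TRACE_MESSAGES)

for i in range(1, 1006):
    trace.append(f"message {i}")

print(len(trace))   # 1000
print(trace[0])     # message 6
print(trace[-1])    # message 1005
```

After 1005 appended messages the first five are gone and the queue still holds exactly 1000 entries.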

I’m not sure why that had to be so cumbersome either. It took me a while to wend my way through the different version dependencies also.

But I have it working with two controllers under NLB that point to the same farm so that there is no single point of failure. Once you have that set up you can create additional farms or scale the existing farm out with additional server instances very easily. And you can drop out any single server instance without downtime for your applications.

I haven’t implemented it yet, but I have outlined a custom load balanced state store using Redis to run on the controllers. So state requests from the farm would be made back to the NLB address of the controllers, and routed to the state service there. That’s the last single point of failure to eliminate. It should be relatively fast as well.

I hope that Microsoft follows up on the product and makes it a bit more robust. That’s a missing piece that doesn’t seem to get the attention it should.

Thank you very much!!! It finally helps. That’s unbelievable what MS users must do!! A nice example why Microsoft will end.

Andres, thank you for doing this write-up, it was helpful in many areas. I have one major problem that I can’t get over and it’s really frustrating and I need it fixed ASAP.

My Setup:

I created an internal DNS record called staging.mydomain.ad that is pointing to the controller server (this is based on info I found online). My controller server is running WFF 2.2 and ARR 3.0 with the default URL Rewrite rules (I have played with them but with no positive outcome).

a. Controller Server running WFF and ARR – (Just call it CorpController) (Not part of the farm)

b. Primary Web Farm Server – (Just call it CorpPri)

c. Secondary Web Farm Server – (Just call it CorpSec)

As stupid as this may sound, I am not understanding a couple of major things…

1. Do the web sites that the users access reside ONLY on CorpPri and CorpSec (via WFF replication)? What I mean is, do you have to create the site on the Controller server for some reason?

2. I think my main issue might relate to not understanding URL Rewrite. Our production web server has about 6 separate sites on it and they all have specific ports assigned (one site would have 8084 (HTTP) and another one would have 8085 (HTTPS) etc). How would that look or work?

I am beyond frustrated with this and nothing I find covers these steps clearly or in depth (not even YouTube land).

Any help would be greatly appreciated!

Hello Robert,

Q1: It is definitely not required to deploy the web site on the controller, only on the web servers, both primary + secondary. The ARR machine must have a proxy web app, that’s it

Q2: I don’t have a definite answer for you for exactly that scenario unfortunately. Are all 6 sites load-balanced using the same ARR machine? Are they all part of a webfarm controlled by the CorpController?

//Andras

Thank you for the reply.

Q1: what do you mean by a proxy web app?

Q2: I currently only have 1 site set up on the server until I get it working. When you say “are all sites load-balanced using the same ARR machine”, yes (I think this is what you mean), the Web Farm was created on the controller server and the primary and secondary web servers are part of the Web Farm.

I really think it’s some simple thing that I am over-thinking. Could you give me a good example of what the URL Rewrite should look like based on the info I included?

recap of setup:

Controller Server with WFF and ARR with one primary and one secondary web farm servers. I have the default URL rewrite rules in place (for the sake of being detailed) at the root level of the Controller server.

current URL Rewrite info:

“Matches the Pattern” – “Wildcards”

Pattern “*”

Action

“Route to Server Farm”

“http://” “staging.mydomain.ad” “/{R:0}”

thank you for your help,

Robert

Robert,

Have you gone through all posts in this series? The last part takes up a basic scenario with WFF: one website, one controller, one primary and two secondary servers.

You’ll find a reference to the proxy website:

“Log onto the controller machine and open the IIS manager. Delete the Default Web Site. Create a new site called arrbase pointing to a blank folder located anywhere you like on c:\. Leave the IP and port values untouched and the Host name field blank:”

You’ll also see how to turn on URL rewrite in ARR:

“The next step is to set up URL rewrite using ARR. Select the name of the server farm and click the Routing Rules icon:”

Have you gone through those steps? I didn’t have to specify any URL rewrite pattern.

//Andras

Andres,

Re-Reading the config I found an issue that resolved the problem of the site not working with a basic URL Rewrite setup. Thank you!

Now on to the other problems…

1. How do I set up a URL Rewrite to point users to a specific port? For example, I have a site URL that is http://staging.mydomain.ad:8084. What would the URL Rewrite rule look like for this?

Thank you again!!

Robert,

I’m glad it works for the basic setup.

The same thing should work for the specific port variant. As you probably know the default port is 80, so http://www.mysite.com is the same as http://www.mysite.com:80, there shouldn’t be any difference if you assign a different port. Just make sure that you set up port 8084 in the bindings on IIS on each machine in the cluster, i.e. the primary and secondary servers.

Now that you have a setup that works with port 80 you can test the same setup and assign port 8084 in the Site Bindings section in IIS.

//Andras

So, I’m confused. According to this https://twitter.com/JustinCouto/status/265596131717836800 WFF is dead. Is it? I want to use it, mainly because I am looking for an easy way to automate servers being pulled from ARR, updated and then put back. Does anyone know an easy way to automate that outside of WFF? My current setup is an NLB cluster of ARR servers and then multiple web server farms behind them. It’s a pain to manually go into each web farm and drain a server, pull it, update it, put it back and then continue with the next server.

Thanks.

I don’t know of any easy replacement but continuous integration tools such as TeamCity can run deployment scripts that carry out what you need: stop the server, deploy the package and start the server again.

//Andras

Thanks. Yeah, I was looking for more of a way to manage the OS-level Windows updates. I guess it will have to be a custom PowerShell script. I was able to find some info on scripting the ARR servers to make them unavailable gracefully.

I wish Microsoft would just open source these types of tools when they decide to pull the plug on them.

Andres,

thanks for the help!

I have gotten past most of the hurdles but I am concerned/puzzled by the check box “Provision server farm” that is in the wizard when you create a new farm.

Here’s my issue with this setting. I have 7 “server farms” running on my WFF server and I am concerned about it corrupting the primary and secondary web servers if I check this for all the server farms. I had the farm completely set up and I noticed that each farm would poll the web servers to update the content etc. I would see this as an issue if all 7 are trying to do this.

Have you ever set up a WFF system with multiple server farm sites?

I also noticed that if you create a server farm and don’t check the box “Provision server farm”, the web servers never say ready for load balancing, but it still syncs all content for the sites.

I wish MS would just create something that is intuitive or at least provide REAL documentation like every other company out there. It’s so frustrating.

After I redid my setup I have the following farm setup:

1. NLB pointing to two ARR servers

2. Two ARR servers running with shared config for failure and load balancing

3. One controller server running WFF with the above mentioned 7 farm sites

4. One primary server

5. 3 secondary servers

6. session state running from the controller server

Hi Robert,

No, I haven’t actually gone any further with WFF than what you see in the blog. My employer decided to move on with another technology and now even “my” test web farm is gone.

//Andras

We’re looking towards implementing such a setup (finally) but some of the comments above have me a little worried, i.e. what’s this about Web Farm Framework being “dead”? And then if your employer is no longer using it, what are you using instead? Are we doing ourselves harm if we go the Web Farm Framework route?

Paul,

I was originally tasked to explore WFF by the previous project leader and this blog series is the result of that research. Then the team was reorganised and the new team leader never actually considered using WFF for anything. Instead we use a product called StingRay for load balancing and TeamCity for automatic builds and content replication across servers.

I’m not sure if WFF is dead, I cannot find any official announcement on the web from MS. In fact setting up WFF wasn’t a straightforward task in the first place due to the lack of clear step-by-step guidelines. Also, I first tried installing WFF using a more recent platform installer than what you see in this post, but I never managed to, as the building blocks were no longer listed. These might be indicative of MS silently dropping WFF but that’s only speculation on my part.

I think you can still use WFF but the level of updates and support might converge towards 0.

//Andras

Gotcha. Thanks for the information. One would think there’d be an easier solution for web farm syncing… everything I’ve come across seems to require multiple things to get it all working (i.e. Web Deploy and PowerShell, or IIS shared config + robocopy, etc.). Was hoping WFF was this single solution, more or less, but if it’s not being developed further, not so sure we want to go that route. Anyway, thanks for the information.